Updating AI Agents safely in production

Giselle van Dongen, Francesco Guardiani

Making AI agents reliable gets a lot of attention: evals, LLM-as-a-judge, guardrails, prompt engineering. Those topics are important. But there's a category of unreliability that hasn't gotten the same attention: updating agents without corrupting ongoing executions.

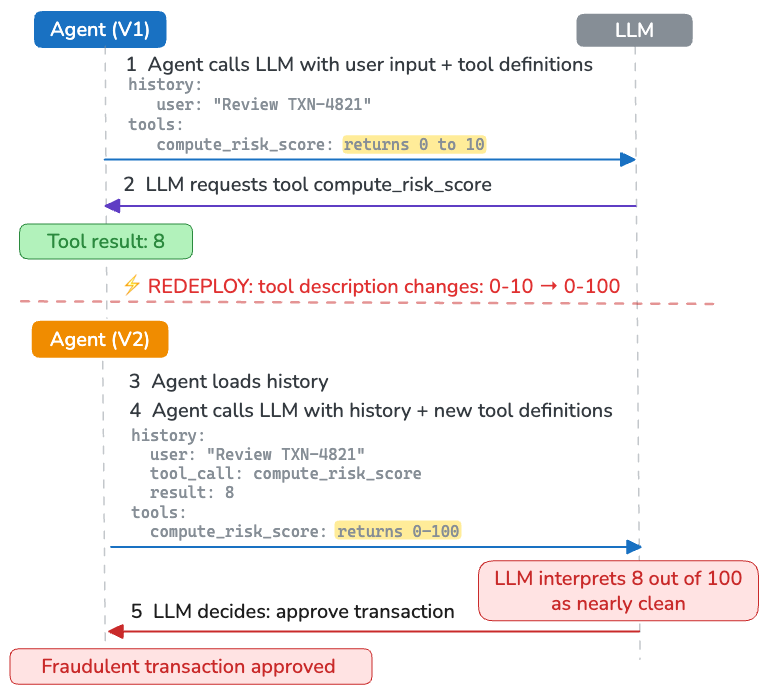

Consider a fraud detection agent with a compute_risk_score tool that returns a score from 0 to 10:

```python
@function_tool
async def compute_risk_score(transaction: Transaction) -> float:
    """Returns a risk score from 0 to 10 where higher means more suspicious."""
    return await fraud_model.score(transaction)
```

A suspicious transaction comes in. The agent calls the tool, which returns 8, so highly suspicious. Before the agent sends this result back to the LLM, the service is redeployed. The new version has a more precise risk calculation that takes more signals into account and widens the scale to 0–100.

```python
@function_tool
async def compute_risk_score(transaction: Transaction) -> float:
    """Returns a risk score from 0 to 100 where higher means more suspicious."""
    return await improved_fraud_model.score(transaction)
```

When the agent resumes (via retries or a message queue), it retrieves its execution history from the session store, including the tool result 8. It calls the LLM to decide what to do next, and supplies the execution history together with the tool descriptions. But the tool description now says "0 to 100," and the LLM interprets 8 out of 100 as nearly clean, so it tells the agent to let the transaction go through.

The agent doesn’t crash or print a warning. It just silently makes the wrong decision. Silent corruption is much harder to detect than a crash, especially when the decision still looks plausible.
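To make the failure concrete, here is a minimal sketch of how the same stored tool result flips its meaning once the scale in the tool description changes. The `interpret_score` helper and its 0.7 threshold are hypothetical stand-ins for the LLM's reasoning; they are not part of any SDK.

```python
def interpret_score(score: float, scale_max: float, threshold: float = 0.7) -> str:
    """Hypothetical stand-in for the LLM: judge a score relative to the
    scale stated in the tool description."""
    return "block" if score / scale_max >= threshold else "allow"


# The tool result was written to the execution history under version 1.
stored_tool_result = 8.0

# Version 1 description says "0 to 10": 8 is highly suspicious.
decision_v1 = interpret_score(stored_tool_result, scale_max=10)

# Version 2 description says "0 to 100": the same 8 now looks nearly clean.
decision_v2 = interpret_score(stored_tool_result, scale_max=100)

print(decision_v1, decision_v2)
```

Nothing in the stored history changed between the two calls. Only the description used to read it did.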

This post shows you how to safely evolve your agents with immutable deployments and pinned executions, and how to implement this in practice. With this in place, versioning becomes an infrastructure property, instead of something you need to worry about at the application level.

The danger of swapping out code from under an agent

The intro example included a tool change, but anything the LLM uses to interpret execution history can cause the same kind of silent failure:

- Schema description changes: agents, tools and guardrails can have input/output schemas with descriptions that the LLM uses to interpret results or structure its output

- Prompts and instructions changes: the agent reasons about its own history differently

- Guardrails changes: a human approval granted under one policy gets treated as an approval under a different one

- Tool removals: the history references tool calls that no longer exist and the agent will likely ignore them or hallucinate about their meaning
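Of the changes listed above, tool removals are the easiest to detect mechanically. The sketch below assumes a hypothetical history format (a list of dicts with `type` and `tool` keys) and a hypothetical `lookup_merchant` tool. Real SDKs store history differently.

```python
def find_dangling_tool_calls(history: list[dict], current_tools: set[str]) -> list[str]:
    """Return the names of tools that appear in the history but no longer exist."""
    called = [entry["tool"] for entry in history if entry.get("type") == "tool_call"]
    return sorted({name for name in called if name not in current_tools})


# Hypothetical execution history recorded by an older version of the agent.
history = [
    {"type": "tool_call", "tool": "compute_risk_score", "result": 8},
    {"type": "tool_call", "tool": "lookup_merchant", "result": "ok"},
    {"type": "message", "content": "Transaction looks suspicious."},
]

# The new version dropped lookup_merchant.
current_tools = {"compute_risk_score"}

dangling = find_dangling_tool_calls(history, current_tools)
print(dangling)
```

A check like this catches removals only. A changed description, prompt, or guardrail policy keeps the same names and passes unnoticed.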

On top of this, agents still face the classic versioning problems of any long-running process. If your application calls three agents in a pre-defined pipeline (planning → execution → review) and you redeploy with a new validation step added at the beginning, then an execution that's already midway through would find itself in a pipeline that doesn't match the path it took to get there.

Why this is hard to solve

Code contains the manual to interpret execution history

The fraud example is one instance of a general problem: your code is the manual the LLM uses to interpret its own execution history. That manual includes tool descriptions, prompts, schemas, and model config. The branching happens inside the model's reasoning, not at a point in the code you can gate.

The execution history lives in a database, but most of the information needed to interpret it lives in docstrings and comments in your code. They get compiled into JSON and sent to the LLM as a manual on how to interpret the pre-existing execution history and understand which actions it can take next. Both are tightly coupled parts of the agent's state, but they're versioned and stored separately.
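One way to see the coupling is to fingerprint the "manual" and store it next to the history. The following is a sketch under assumptions: the `definition_fingerprint` and `can_resume` helpers, the session layout, and the example prompt are all hypothetical.

```python
import hashlib
import json


def definition_fingerprint(prompt: str, tools: dict[str, str], model_config: dict) -> str:
    """Hash everything the LLM uses to interpret the execution history."""
    payload = json.dumps(
        {"prompt": prompt, "tools": tools, "model": model_config},
        sort_keys=True,  # stable key order so equal definitions hash equally
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


PROMPT = "You are a fraud analyst."  # hypothetical prompt
MODEL = {"temperature": 0}           # hypothetical model config

v1 = definition_fingerprint(
    PROMPT,
    {"compute_risk_score": "Returns a risk score from 0 to 10 where higher means more suspicious."},
    MODEL,
)
v2 = definition_fingerprint(
    PROMPT,
    {"compute_risk_score": "Returns a risk score from 0 to 100 where higher means more suspicious."},
    MODEL,
)

# The session stores the history together with the fingerprint that produced it.
session = {"history": [{"tool": "compute_risk_score", "result": 8}], "fingerprint": v1}


def can_resume(session: dict, current_fingerprint: str) -> bool:
    """Only resume on code whose definitions match the ones that wrote the history."""
    return session["fingerprint"] == current_fingerprint


print(can_resume(session, v1), can_resume(session, v2))
```

A fingerprint detects the mismatch but does not resolve it. The old code still has to be available somewhere for the execution to finish.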

Agents are long-running

Agents have unpredictable execution times that can take up to weeks when waiting on human approvals or doing extensive research. This makes it inevitable that executions will overlap with version upgrades.

Resumability complicates versioning

Several agent SDKs (LangChain, ADK, …) let you resume agents halfway through their execution, to help with human approvals or long-running agents. They usually use the execution history in the session state as the basis for resumption. If a new version of the agent was deployed in the meantime, the resumed agent reads a history written under the old definitions through the new ones, which is exactly the mismatch described above.

Why typical approaches don’t work well

Stateful applications usually solve versioning by embedding version markers in state and adding branching logic to the code. This doesn't work well for agents, because they aren't fixed graphs where you can place version gates at known branch points. Every iteration writes its own next step, so you'd quickly end up with version branches on top of version branches. This might work for fixing deserialization of tool inputs/outputs across versions, but it gets much harder to maintain for tool descriptions, prompts, schemas, etc.
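For illustration, this is roughly what a version gate looks like for the risk score. The `parse_risk_score` helper and the `state_version` marker are hypothetical names.

```python
def parse_risk_score(raw: float, state_version: int) -> float:
    """Normalize a stored score to the 0-100 scale based on a version marker."""
    if state_version == 1:
        return raw * 10  # version 1 stored scores on a 0-10 scale
    if state_version == 2:
        return raw       # version 2 already uses 0-100
    raise ValueError(f"unknown state version: {state_version}")


print(parse_risk_score(8, state_version=1))
print(parse_risk_score(8, state_version=2))
```

This fixes the number your own code reads. It does not fix what the LLM sees, unless you also rewrite the stored history or branch the tool description, the prompt, and every schema for each version.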

Another suggestion is to keep tasks short-running, so they are less likely to overlap with redeployments. But even if you run each iteration of the agent loop as a separate task, the execution history is still part of the state and still needs to be interpretable across versions. And most agent SDKs are not designed to work this way; you would essentially be reimplementing the agent loop yourself.

In practice, the simplest solution is to move versioning out of application code entirely and enforce it at the infrastructure layer.

Two rules for safe agent versioning

You can eliminate these issues by enforcing two rules at the infrastructure layer:

- Immutable deployments: Every deployment is a complete, versioned snapshot of the agent: code, prompts, tool definitions, schemas, guardrails, and model configuration. Once deployed, it never changes. New versions are deployed alongside old ones, not on top of them.

- Pinning executions to deployments: Every agent execution continues on the same version it started with. Every retry, resumption, and callback returns to that same version, while new requests route to the latest version.

Together, these rules eliminate mid-execution version mismatches and give you version-aware execution.
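The two rules can be sketched in a few lines of plain Python. This is an illustrative model, not Restate's implementation: the `Deployment` and `Router` classes, the execution IDs, and the `http://agent-v1/` endpoint are assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)  # frozen: a deployment never changes after creation
class Deployment:
    deployment_id: str
    endpoint: str


@dataclass
class Router:
    deployments: list[Deployment] = field(default_factory=list)
    pins: dict[str, str] = field(default_factory=dict)  # execution id -> deployment id

    def register(self, deployment: Deployment) -> None:
        """Rule 1: new versions are added alongside old ones, never on top."""
        if any(d.deployment_id == deployment.deployment_id for d in self.deployments):
            raise ValueError("deployments are immutable; register a new id instead")
        self.deployments.append(deployment)

    def start(self, execution_id: str) -> Deployment:
        """New requests go to the latest deployment and get pinned to it."""
        latest = self.deployments[-1]
        self.pins[execution_id] = latest.deployment_id
        return latest

    def resume(self, execution_id: str) -> Deployment:
        """Rule 2: retries, resumptions, and callbacks return to the pinned version."""
        pinned_id = self.pins[execution_id]
        return next(d for d in self.deployments if d.deployment_id == pinned_id)


router = Router()
router.register(Deployment("v1", "http://agent-v1/"))
started_a = router.start("exec-a")

router.register(Deployment("v2", "http://agent-v2/"))
started_b = router.start("exec-b")
resumed_a = router.resume("exec-a")

print(started_a.endpoint, started_b.endpoint, resumed_a.endpoint)
```

The execution that started before the second registration resumes on `v1`, while the one that started after it runs on `v2`.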

How Restate makes this work

Restate is a lightweight durable execution runtime. It makes sure that requests get processed to completion by taking care of retries and recovery.

When you deploy a version of your code, you register its unique endpoint with Restate. This registered endpoint is called a deployment.

```shell
restate deployments register http://agent-v2/
```

When you register a new deployment, Restate automatically routes new requests to the latest version while existing invocations continue on their original deployment. This happens automatically, without any migration logic, version gates, or branching.

💡 Restate makes versioning a property of the infrastructure rather than a problem you solve in application code.

Durable execution as foundation

Once a request is pinned to a version, it is driven to completion via Durable Execution. Restate records every non-deterministic step the agent takes in a journal: LLM calls, API requests within tools, generated timestamps and IDs. When an execution gets interrupted, by a crash, a network failure, or a week-long wait for human approval, it deterministically replays the journal and picks up exactly where it left off.

Each execution is a durable process whose state and history are persisted and version-pinned. When an execution resumes, it replays the journal and continues from where it left off.
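The journal-and-replay idea can be illustrated with a toy model. This is a deliberately simplified sketch, not how Restate is implemented. The `Journal` class, the step names, and the simulated crash are assumptions.

```python
from typing import Any, Callable, Optional


class Journal:
    """Toy journal: records step results and replays them on recovery."""

    def __init__(self, entries: Optional[list] = None) -> None:
        self.entries: list[tuple[str, Any]] = list(entries or [])
        self._cursor = 0

    def run(self, name: str, action: Callable[[], Any]) -> Any:
        if self._cursor < len(self.entries):
            # Replay: reuse the recorded result instead of re-executing the step.
            _, result = self.entries[self._cursor]
        else:
            result = action()
            self.entries.append((name, result))
        self._cursor += 1
        return result


calls: list[str] = []  # tracks how often the tool really executes


def compute_score() -> int:
    calls.append("compute_score")
    return 8


def agent(journal: Journal, crash_before_decision: bool = False) -> str:
    score = journal.run("compute_risk_score", compute_score)
    if crash_before_decision:
        raise RuntimeError("simulated crash")
    return journal.run("decide", lambda: "block" if score >= 7 else "allow")


first_attempt = Journal()
try:
    agent(first_attempt, crash_before_decision=True)
except RuntimeError:
    pass

# Recovery: a new attempt starts from the persisted entries.
recovered = Journal(first_attempt.entries)
decision = agent(recovered)

print(decision, calls)
```

The tool runs once even though the agent function runs twice. The second attempt reads the recorded 8 from the journal and continues from the decision step.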

Failing loudly on mismatches

If something about the execution environment has changed in a way that conflicts with the journal, the mismatch will be detected during replay and will cause the execution to fail loudly, rather than silently continuing. This turns what would be a silent corruption into an explicit signal that something needs attention and can be fixed (see below).
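As a rough sketch of what failing loudly can look like, the snippet below compares the step the code wants to run against the step recorded in the journal. The post does not spell out what Restate checks during replay, so the name-based comparison, `JournalMismatchError`, and `replay_step` are assumptions for illustration.

```python
class JournalMismatchError(Exception):
    """Raised when the code diverges from the recorded journal."""


def replay_step(journal: list[tuple[str, object]], index: int, expected_name: str) -> object:
    """Return the recorded result, or fail if the code now runs a different step."""
    recorded_name, result = journal[index]
    if recorded_name != expected_name:
        raise JournalMismatchError(
            f"journal entry {index} is {recorded_name!r}, "
            f"but the code now runs {expected_name!r}"
        )
    return result


journal = [("compute_risk_score", 8), ("decide", "block")]

# Same code as when the journal was written: replay succeeds.
replayed = replay_step(journal, 0, "compute_risk_score")

# Code that added a validation step up front: replay fails instead of guessing.
try:
    replay_step(journal, 0, "validate_input")
    mismatch_detected = False
except JournalMismatchError as err:
    mismatch_detected = True
    print(err)
```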

Serverless functions for nearly free, immutable deployments

Function-as-a-Service platforms are a natural fit for this model, because they support immutable deployments out-of-the-box. When you publish an AWS Lambda version, it creates an immutable snapshot with a unique ARN. When you deploy to Vercel, each deployment gets a unique URL. These endpoints never change.

The cost and operational overhead are dramatically lower than for parallel long-running deployments on reserved infrastructure. A published Lambda version is just a stored artifact, and keeping it around incurs no compute cost. Old versions scale to zero as soon as no executions need them. And a human approval that comes in a month later just wakes the pinned version back up through durable execution.

That said, keeping old versions around isn't zero-cost in every sense. You still need to think about dependency updates, security patches for long-lived versions, and cold start latency for rarely invoked endpoints. This is where the production escape hatches described below come in nicely.

The deployment flow can be fully automated: a git commit to main triggers a build, deploys a new immutable endpoint, and registers it with Restate. Here's what that looks like with Vercel and GitHub Actions:

```yaml
name: Register to Restate

on:
  repository_dispatch:
    types:
      - 'vercel.deployment.promoted'

jobs:
  register-to-restate:
    runs-on: ubuntu-latest
    steps:
      - name: Register Restate deployment
        run: |
          npx -y @restatedev/restate deployment register \
            ${{ github.event.client_payload.url }}/restate
```

Have a look at the documentation to set this up yourself for Vercel, Cloudflare Workers, AWS Lambda, or Deno Deploy.

Together with Restate Cloud, this gives you production-ready infrastructure without managing any of it.

Automated versioning on Kubernetes

On serverless platforms this model works naturally. On reserved infrastructure like Kubernetes, keeping multiple versions running has a real cost. Having multiple versions running in parallel consumes resources and adds operational overhead.

Restate's Kubernetes operator helps with this by managing the lifecycle: deploying new versions, draining traffic from old ones, and decommissioning them once they're idle.

Short-lived executions drain naturally within minutes or hours and can be cleaned up quickly. For executions that run for weeks or months (for example, an agent waiting on a long procurement approval chain), you can use Restate's escape hatches (see below) to move the invocation to a new deployment (cancel-rollback-restart) when maintaining the old one is no longer practical.

Knowing what's running and where

Because every deployment is registered and every invocation is pinned, Restate always knows what versions exist and what's executing on each one.

You can see which services are deployed where, what configuration they're running, and even how many invocations are in-flight on each version. This tells you whether it's safe to decommission an old deployment.
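The decommissioning decision boils down to counting in-flight invocations per deployment. The sketch below assumes a hypothetical pin table (execution ID to deployment ID) and a set of completed execution IDs. It does not use Restate's actual introspection interface.

```python
from collections import Counter


def in_flight_by_deployment(pins: dict[str, str], completed: set[str]) -> Counter:
    """Count executions that are pinned to each deployment and not yet finished."""
    return Counter(dep for exec_id, dep in pins.items() if exec_id not in completed)


def safe_to_decommission(deployment_id: str, pins: dict[str, str], completed: set[str]) -> bool:
    """A deployment can go once nothing in-flight is pinned to it."""
    return in_flight_by_deployment(pins, completed)[deployment_id] == 0


# Hypothetical state: two executions on v1, one on v2.
pins = {"exec-a": "v1", "exec-b": "v1", "exec-c": "v2"}

before = safe_to_decommission("v1", pins, completed={"exec-a"})
after = safe_to_decommission("v1", pins, completed={"exec-a", "exec-b"})

print(before, after)
```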

Because executions are pinned to deployments, every trace is also tied to a specific version of the agent. When you inspect a trace, you know exactly which tool definitions, prompts, and model configuration were active when those decisions were made.

Production escape hatches for when immutability is too rigid

Pinned invocations are the right default, but production workloads sometimes need more flexibility. Sometimes you need to override the pin:

- A critical bug in version 3 is causing an agent to make wrong decisions, and you need to move its in-flight executions to version 4.

- An execution has been stuck waiting on a third-party callback that will never come, and you want to cancel and restart it on the latest version.

- You're decommissioning infrastructure and need to migrate remaining executions off an old deployment.

Restate provides escape hatches for these situations: you can move ongoing invocations to new deployments, or cancel and restart them. These are deliberate, auditable operations that you can carefully plan and manage, not something that happens silently on every deploy. Have a look at our blog post on invocation control for more details.
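What separates an escape hatch from an accidental version swap is that it is explicit and leaves a record. A minimal sketch of that idea follows. The `PinTable` class, its `move` method, and the audit record fields are hypothetical and do not mirror Restate's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class PinTable:
    pins: dict[str, str]  # execution id -> deployment id
    audit_log: list[dict] = field(default_factory=list)

    def move(self, execution_id: str, target: str, reason: str, operator: str) -> None:
        """Override a pin deliberately: require a reason and record who did it."""
        if not reason:
            raise ValueError("moving a pinned execution requires a reason")
        previous = self.pins[execution_id]
        self.pins[execution_id] = target
        self.audit_log.append(
            {
                "execution_id": execution_id,
                "from": previous,
                "to": target,
                "reason": reason,
                "operator": operator,
                "at": datetime.now(timezone.utc).isoformat(),
            }
        )


table = PinTable(pins={"exec-a": "v3"})
table.move("exec-a", target="v4", reason="critical bug in v3", operator="oncall")

print(table.pins["exec-a"], len(table.audit_log))
```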

Putting it together

The end result is the following:

- You deploy a new version of your agent to a new, unique endpoint

- You register it with Restate

- New invocations automatically route to the latest version

- In-flight invocations continue on their pinned version through retries, sleeps, and interruptions

- Durable execution journals every non-deterministic step and replays on recovery

- If a mismatch is detected during replay, the system fails loudly

- You can inspect what's running where and decommission old versions when they're idle

- When production demands it, you have escape hatches to move or restart invocations

No version branching in application code, no silent reinterpretation of execution history, and no guessing which version produced a trace.

Try it out

Get started by checking out Restate’s Agent SDK integrations:

- Agent Quickstart

- Restate AI docs

- Restate Cloud for zero infrastructure management

Restate is open, free, and available on GitHub. Star the project if you like what we're doing and join our community on Discord or Slack.