Resilient, observable agents with Restate and Langfuse

Restate Team

If you’ve been building agents, you have probably run into the same thing as many others: running an agent as a demo is easy, but running it in production is not.

Agents are built on top of non-deterministic LLMs, external APIs, and multi-step workflows. This makes it hard to make them behave reliably in real-world scenarios. Production systems need to consistently produce the right outputs, behave predictably across versions, and keep running through API timeouts, rate limits, and redeploys.

In this post, we show how Restate and Langfuse can help with this. Restate owns execution: retries, recovery, idempotency, and durable workflows. Langfuse owns observability and quality control: traces, evals, prompt versioning.

We'll walk you through how this works and how to set it up, using a running example.

Building a resilient agent with Restate

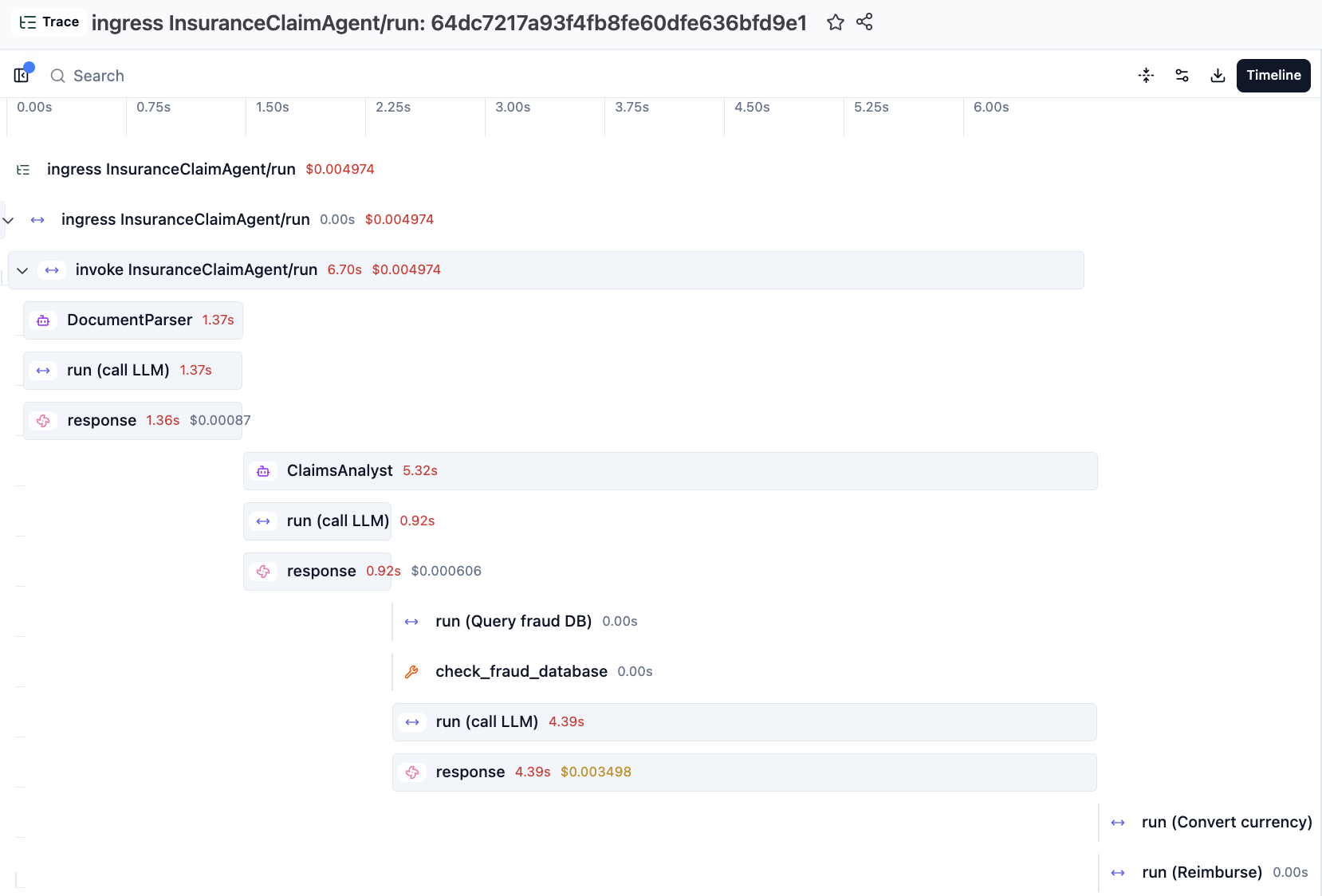

Imagine an insurance claim agent that parses a claim document, analyzes it, converts currency, and reimburses the claim. If something fails halfway through, you want it to retry and pick up where it left off.

Restate enables this by making every non-deterministic step durable. LLM calls, API calls, MCP calls, and tool interactions are recorded in a journal, allowing failed executions to be replayed and resumed safely.

Here's a four-step claim processor using Restate’s OpenAI Agent integration:

    @claim_service.handler()
    async def run(ctx: restate.Context, req: ClaimDocument) -> str:
        # Step 1: Parse the claim document (Agentic step)
        parsed = await DurableRunner.run(parse_agent, req.text)
        claim: ClaimData = parsed.final_output

        # Step 2: Analyze the claim (Agentic step)
        response = await DurableRunner.run(analysis_agent, claim.model_dump_json())
        assessment: ClaimAssessment = response.final_output
        if not assessment.valid:
            return "Claim rejected"

        # Step 3: Convert currency (durable step, no LLM)
        converted = await ctx.run_typed("Convert", convert_currency, amount=claim.amount)

        # Step 4: Process reimbursement (durable step, no LLM)
        await ctx.run_typed("Reimburse", reimburse, amount=converted)
        return "Claim reimbursed"

The agentic steps run through DurableRunner, while the other workflow steps are wrapped in ctx.run_typed for retries and result persistence. The result is a workflow that can recover from failures at any point without losing progress.
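To make the journaling idea concrete, here is a toy sketch in plain Python. It is not Restate's implementation: ToyJournal, the two step functions, the made-up amount, and the simulated timeout are all hypothetical names and values invented for illustration. The result of each named step is recorded, so a retry replays completed steps instead of re-executing them.

```python
from typing import Any, Callable


class ToyJournal:
    """Hypothetical in-memory journal: records each named step's result."""

    def __init__(self) -> None:
        self.entries: dict[str, Any] = {}

    def run(self, name: str, action: Callable[[], Any]) -> Any:
        # Replay: if this step already completed, return the recorded result.
        if name in self.entries:
            return self.entries[name]
        # First execution: run the action and record its result.
        result = action()
        self.entries[name] = result
        return result


# Counters so we can see how often each side effect actually ran.
calls = {"convert": 0, "reimburse": 0}


def convert_currency() -> float:
    calls["convert"] += 1
    return 92.0  # made-up converted amount


def reimburse() -> str:
    calls["reimburse"] += 1
    if calls["reimburse"] == 1:
        # Simulated transient failure on the first attempt only.
        raise TimeoutError("payment API timed out")
    return "reimbursed"


def workflow(journal: ToyJournal) -> str:
    journal.run("Convert", convert_currency)
    return journal.run("Reimburse", reimburse)


journal = ToyJournal()
try:
    workflow(journal)        # first attempt fails at the second step
except TimeoutError:
    pass
outcome = workflow(journal)  # retry: "Convert" is replayed, not re-executed
```

After the retry, the conversion has run once and the reimbursement twice (one failed attempt, one success). A real durable execution engine also persists the journal across process crashes and restarts, which this in-memory dictionary does not.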

Adding Langfuse tracing

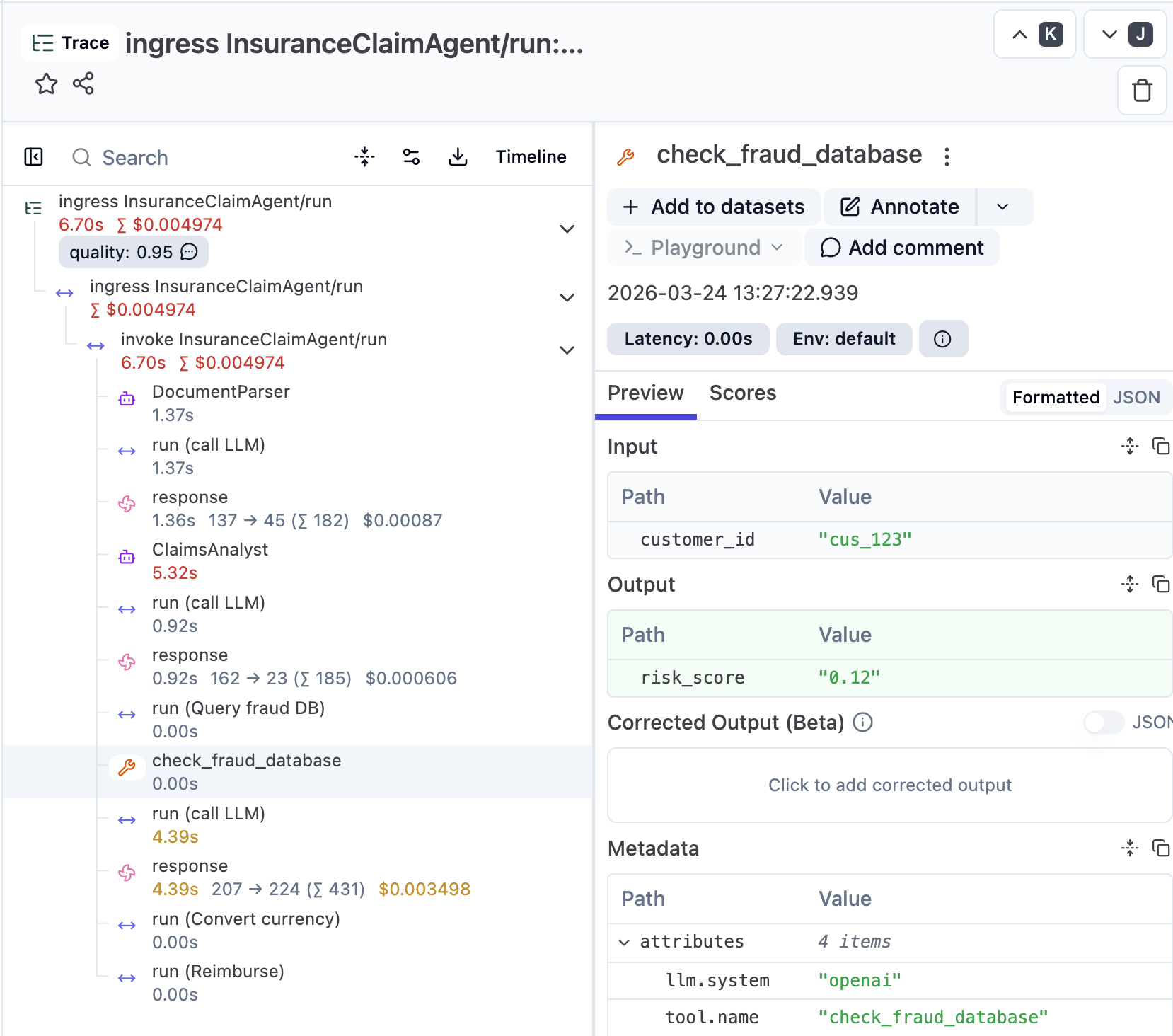

Our agent is now resilient to failures, but when a claim gets rejected unexpectedly, or a reimbursement amount looks wrong, you need to be able to understand what happened. This is where Langfuse comes in: it captures detailed traces of AI actions and metadata, giving you visibility into what happened inside each execution.

Langfuse records information such as agent decisions, tool calls, model configuration, token usage, prompt versions, and user feedback, all within a single trace.

Enabling it takes a few lines in __main__.py:

    langfuse = get_client()

    tracer = OITracer(
        RestateTracer(trace_api.get_tracer("openinference.openai_agents")),
        config=TraceConfig(),
    )
    set_trace_processors([OpenInferenceTracingProcessor(tracer)])

Restate manages the parent span and exports all workflow steps as OpenTelemetry traces, while Langfuse enriches them with AI-specific spans and metadata.

The result is a single trace that covers everything from request intake to final reimbursement.

From observability to quality control and improvement

Traces are useful for more than debugging. They are also the foundation for improving your agents: iterating on prompts, comparing agent versions to catch regressions, and running evaluations to surface quality issues before users hit them.

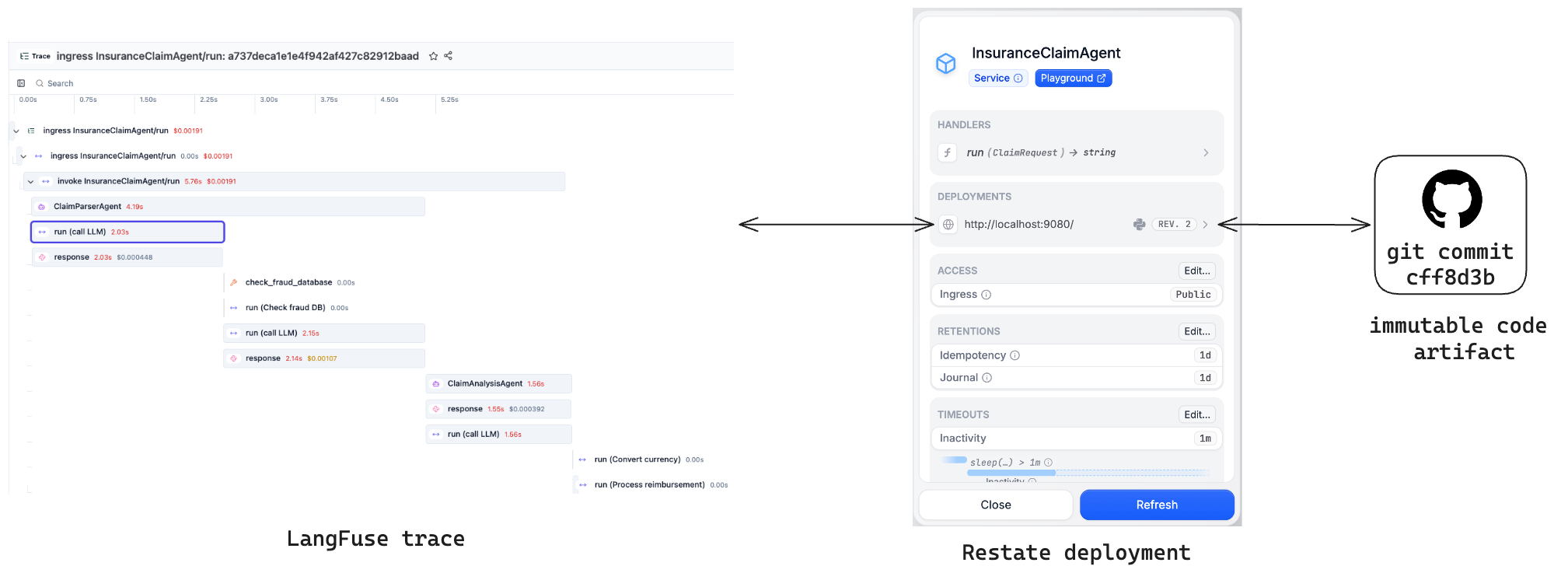

Version-pinned traces to attribute agent behavior

For traces to be meaningful, they must be tied to the exact context in which they were generated: the agent version, prompt version, tool definitions, and execution history.

Restate's versioning model guarantees this by ensuring that each execution runs on a fixed version of the code. When you deploy a new version of your agent, Restate routes new requests to the latest version, while ongoing executions always continue on the version they started with, including retries and resumptions.

This makes every trace attributable to a single, immutable version of the code. You can compare behavior across versions in Langfuse, spot regressions, and decide whether to roll back.
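The pinning rule described above can be sketched as a toy model. This is an illustration only, not how Restate implements versioning; ToyRouter, its methods, and the version labels are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ToyRouter:
    """Hypothetical router: pins each invocation to the version it started on."""

    latest: str = "v1"
    pinned: dict[str, str] = field(default_factory=dict)

    def deploy(self, version: str) -> None:
        # Registering a new deployment only changes where NEW requests go.
        self.latest = version

    def start(self, invocation_id: str) -> str:
        # New invocations are routed to the latest deployment and pinned to it.
        self.pinned[invocation_id] = self.latest
        return self.latest

    def resume(self, invocation_id: str) -> str:
        # Retries and resumptions stay on the version the invocation started with.
        return self.pinned[invocation_id]


router = ToyRouter()
router.start("claim-1")   # starts on v1
router.deploy("v2")       # new agent version goes live
router.start("claim-2")   # starts on v2
```

Here claim-1 keeps resuming on v1 even after v2 is deployed, while claim-2 runs on v2, so every trace maps to exactly one code version.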

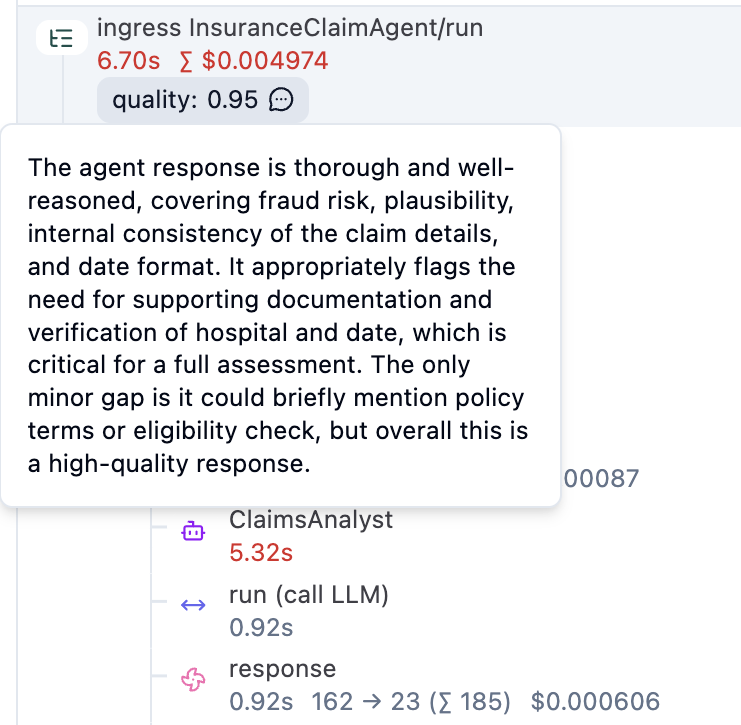

Async evals that don’t block your agent

With version-pinned traces in place, you can go further by evaluating agent outputs automatically. Langfuse supports LLM-as-a-Judge scoring and periodic evaluations, which can be run asynchronously using Restate workflows.

Instead of blocking the main agent execution, evaluations are submitted as background tasks:

    ctx.service_send(
        evaluate,
        arg=EvaluationRequest(
            traceparent=ctx.request().attempt_headers.get("traceparent"),
            input=claim.model_dump_json(),
            output=assessment.model_dump_json(),
        ),
    )

Restate acts as both the queue and the orchestrator that drives the eval to completion. The evaluation workflow runs the judge and writes the score back to the original claim trace (full code):

    @evaluation_service.handler()
    async def evaluate(ctx: restate.Context, req: EvaluationRequest):
        # Step 1: Run the LLM judge (durable, retried on failure)
        result = await DurableRunner.run(
            judge_agent,
            # Newlines keep the three parts of the judge prompt separate
            "Evaluate this insurance claim processing:\n"
            f"Claim Input: {req.input}\n"
            f"Agent Output: {req.output}",
        )
        evaluation: EvaluationScore = result.final_output

        # Step 2: Write the score to Langfuse on the original claim trace
        async def score_trace() -> None:
            langfuse.create_score(
                trace_id=req.trace_id(),
                name="quality",
                value=evaluation.score,
                data_type="NUMERIC",
                comment=evaluation.reason,
            )
            langfuse.flush()

        await ctx.run_typed("Score trace in Langfuse", score_trace)
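The handler calls req.trace_id(), which the snippet does not show. One plausible way to implement it, assuming the traceparent value follows the W3C Trace Context format (version-traceid-parentid-flags), is sketched below. The dataclass shape is an assumption based on the fields used above; the example repository may define it differently.

```python
from dataclasses import dataclass
from typing import Optional

HEX_DIGITS = set("0123456789abcdef")


@dataclass
class EvaluationRequest:
    # Hypothetical shape; the fields mirror those used in the snippets above.
    traceparent: Optional[str]
    input: str
    output: str

    def trace_id(self) -> str:
        """Extract the 32-character hex trace ID from a W3C traceparent header."""
        if not self.traceparent:
            raise ValueError("missing traceparent header")
        # Format: version-traceid-parentid-flags
        parts = self.traceparent.split("-")
        if len(parts) < 4:
            raise ValueError(f"malformed traceparent: {self.traceparent!r}")
        candidate = parts[1]
        if len(candidate) != 32 or not set(candidate) <= HEX_DIGITS:
            raise ValueError(f"malformed trace ID: {candidate!r}")
        return candidate


req = EvaluationRequest(
    traceparent="00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01",
    input="{}",
    output="{}",
)
trace_id = req.trace_id()
```

Passing the header along with the request is what lets the background evaluation attach its score to the trace of the claim that triggered it, rather than to its own trace.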

Prompt fetches as durable steps

Langfuse also provides version-controlled prompt management. Teams can iterate on prompts in the Langfuse UI and promote tested versions to production. With Restate, each prompt fetch becomes a durable step: retries reuse the same fetched version, while new executions use the latest.

    # Fetch the prompt from Langfuse, durably journaled
    langfuse = get_client()

    def fetch_prompt() -> str:
        prompt = langfuse.get_prompt("claim-agent", type="text")
        return prompt.compile()

    prompt = await ctx.run_typed("Fetch prompt", fetch_prompt)

Running the example

The full code is available on GitHub. Have a look at the Langfuse integration docs to see examples with Google ADK, Pydantic AI, or Restate-only agents.

To run the OpenAI Agents example locally:

Prerequisites: a Langfuse account and API key, an OpenAI API key, and Restate installed, for example with brew:

    brew install restatedev/tap/restate-server restatedev/tap/restate

Download the example:

    restate example python-openai-agents-examples
    cd python-openai-agents-examples/langfuse

Start the agent service:

    export LANGFUSE_PUBLIC_KEY=pk-lf-...
    export LANGFUSE_SECRET_KEY=sk-lf-...
    export LANGFUSE_BASE_URL=https://cloud.langfuse.com
    export OPENAI_API_KEY=sk-proj-...
    uv run --env-file .env .

Start Restate and configure it to export traces to Langfuse:

    export LANGFUSE_PUBLIC_KEY=pk-lf-...
    export LANGFUSE_SECRET_KEY=sk-lf-...
    export RESTATE_TRACING_HEADERS__AUTHORIZATION="Basic $(echo -n "${LANGFUSE_PUBLIC_KEY}:${LANGFUSE_SECRET_KEY}" | base64)"
    restate-server --tracing-endpoint otlp+https://cloud.langfuse.com/api/public/otel/v1/traces

Go to the Restate UI at http://localhost:9070 and register the service at http://localhost:9080.

Then, click on the handler to go to the playground, and send the default request.
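The Authorization header passed to Restate in the tracing step is standard HTTP Basic auth: the public and secret key joined by a colon, then base64-encoded. If traces don't show up, one thing worth checking is whether your shell's base64 wrapped the output across lines, since some implementations wrap long output by default. As a cross-check, here is a minimal sketch that builds the same value in Python; the function name and the placeholder keys are made up for illustration.

```python
import base64


def langfuse_basic_auth(public_key: str, secret_key: str) -> str:
    """Build an HTTP Basic Authorization header value from a key pair."""
    credentials = f"{public_key}:{secret_key}".encode("utf-8")
    # b64encode never inserts line breaks, so the result is a single line.
    token = base64.b64encode(credentials).decode("ascii")
    return f"Basic {token}"


# Placeholder keys for illustration only, not real credentials.
header = langfuse_basic_auth("pk-lf-example", "sk-lf-example")
print(header)
```

The printed value can be exported as RESTATE_TRACING_HEADERS__AUTHORIZATION in place of the shell substitution.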

Start building resilient agents

By combining Restate and Langfuse, you get a unified stack for execution reliability, observability, and quality control.

Get started with a fully serverless setup: Restate Cloud, Langfuse Cloud, and your favorite serverless platform to run your agents.

Both Restate and Langfuse are open, free, and available on GitHub (Restate / Langfuse). Star the projects if you like what we're doing and ask any questions you have on Discord or Slack.